The Translation Gap

What AI gets wrong about your practice. A structured, multi-engine audit of three therapy clinics in Titusville, FL — run across ChatGPT, Perplexity, Claude, Copilot, and Gemini on the same day, against the same query.

Even When AI Cites You Correctly,

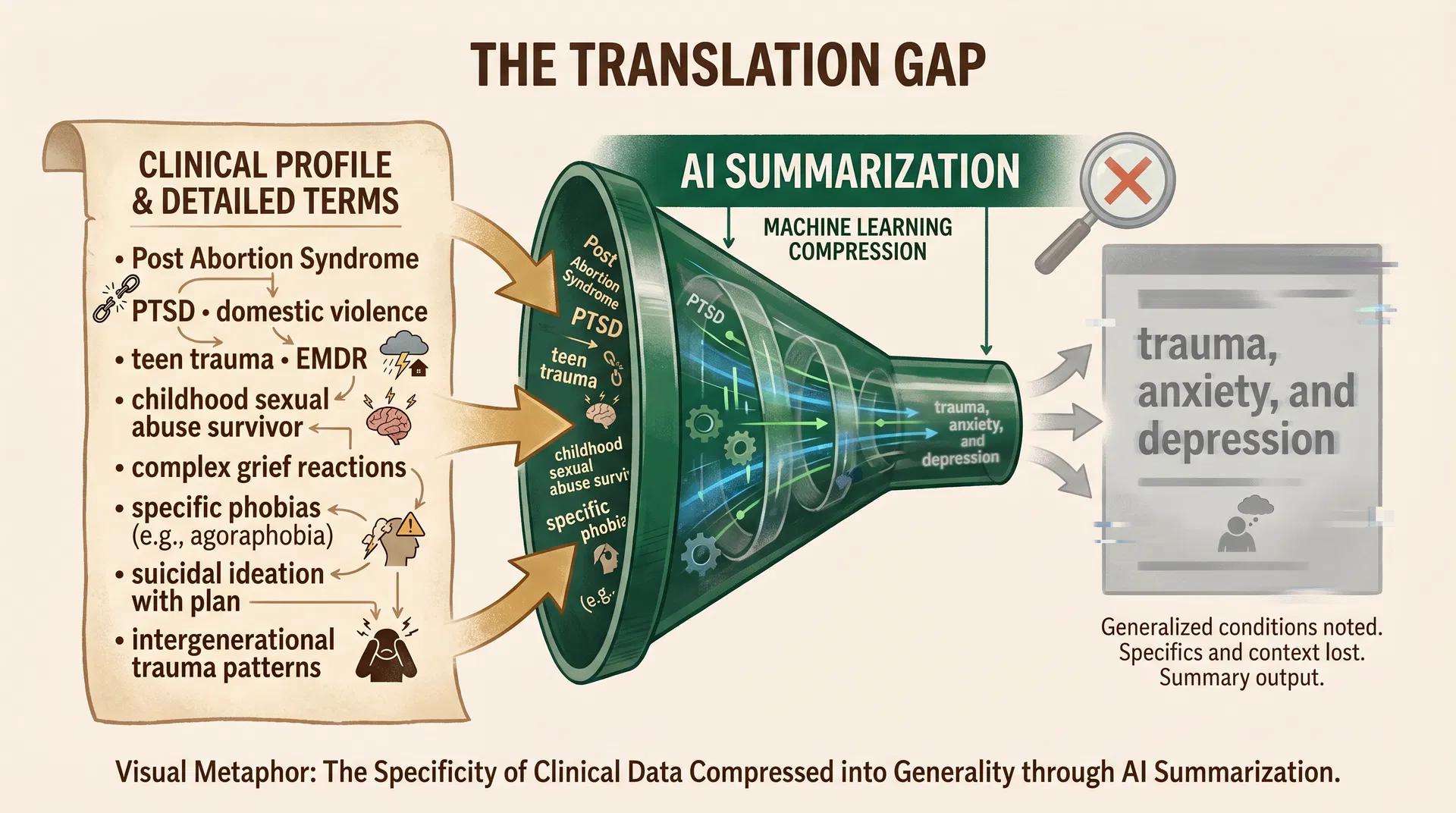

It Describes You Wrong

Absolute Victory Counseling, the clinic winning the directory game, specializes in PTSD from domestic violence and Post Abortion Syndrome (PAS). Therapist Sylvia Dorsey's Psychology Today profile says so in plain English.

"I specialize in Post-Traumatic Stress Disorder (PTSD) as a result of domestic violence and Post Abortion Syndrome (PAS) from having an abortion or being a part of the abortion process in some way."

The AI's description: "Focuses on trauma, anxiety, and depression."

That description is not wrong. It is also not right. A woman looking for a therapist who understands post-abortion grief will not find Sylvia Dorsey in that sentence.

"The expertise is real. The translation is failing."

The AI summarization funnel: fifteen years of clinical specificity compressed into three generic words. The machine isn't making a judgment about quality — it's making a judgment about legibility.

A beautiful website does not guarantee AI visibility.

Having no website does not prevent it. Your visibility depends on where your expertise lives and whether the specific engines your families use can read that location.

Being cited is not the same as being described accurately.

The clinic winning this test is still flattened into 'trauma, anxiety, and depression', even though its clinician's work is specific to two narrow presentations.

You cannot audit this yourself by asking AI.

The tool lies, then corrects itself in the opposite direction, and sounds confident both times. You need raw outputs from multiple engines, captured on the same day.
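The difference between being cited and being described accurately can be checked mechanically once the raw text is captured. The sketch below is one possible approach, not the audit's actual tooling: it takes the two quotes from the Absolute Victory Counseling example and reports which specialty terms from the profile survive in the AI description. The function name `surviving_terms` and the choice of terms are illustrative assumptions.

```python
import re

def surviving_terms(source_text: str, ai_text: str, terms: list[str]) -> dict[str, bool]:
    """For each term present in the source profile, report whether the AI text kept it."""
    def has(term: str, text: str) -> bool:
        # Whole-word, case-insensitive match.
        pattern = r"\b" + re.escape(term) + r"\b"
        return re.search(pattern, text, flags=re.IGNORECASE) is not None

    return {t: has(t, ai_text) for t in terms if has(t, source_text)}

# Both strings are quoted from the case study above.
profile = (
    "I specialize in Post-Traumatic Stress Disorder (PTSD) as a result of "
    "domestic violence and Post Abortion Syndrome (PAS) from having an abortion "
    "or being a part of the abortion process in some way."
)
ai_summary = "Focuses on trauma, anxiety, and depression."

# Assumption: these are the terms a prospective client might search for.
terms = ["PTSD", "domestic violence", "Post Abortion Syndrome", "abortion"]

report = surviving_terms(profile, ai_summary, terms)
for term, kept in report.items():
    print(f"{term!r}: {'kept' if kept else 'lost'}")
```

Every term comes back as lost, which is the translation gap in miniature: the description is not false, but none of the language that distinguishes the practice made it through.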

Five Engines, Three Clinics,

Wildly Different Results

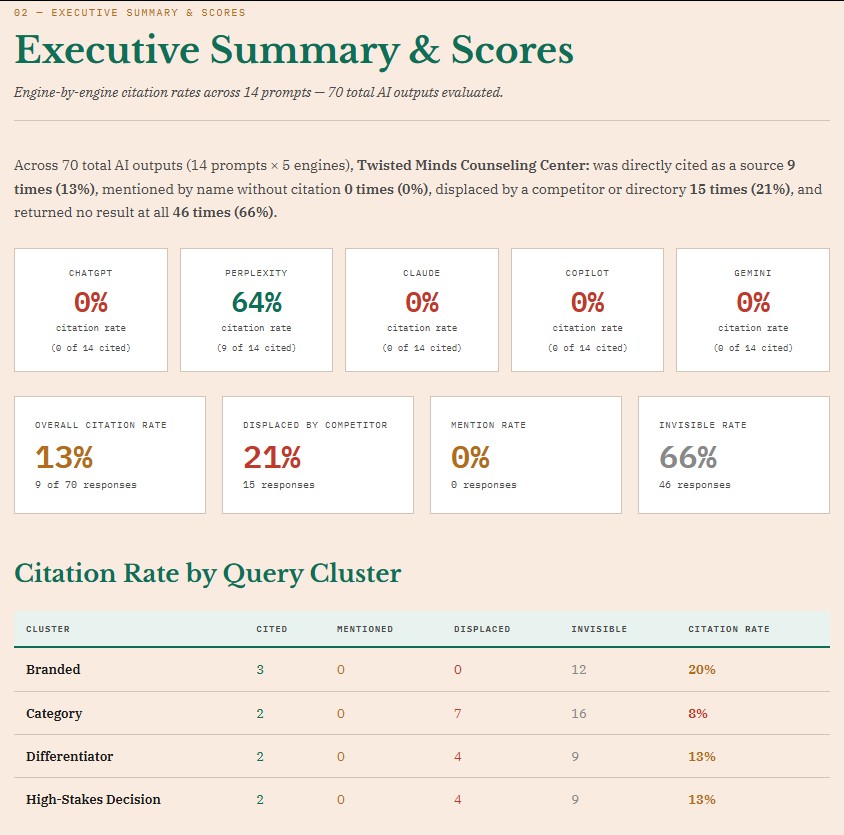

The idea that "AI visibility" is one score is wrong. It's at least five scores, and your expertise can be invisible on half of them while being perfectly findable on the other half.

Twisted Minds was cited 9 of 70 times (13%), but only by Perplexity. It was entirely invisible to ChatGPT, Claude, Copilot, and Gemini.
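To see how a single overall number hides the per-engine picture, here is a minimal arithmetic sketch using the figures above (9 citations in 70 outputs, all from Perplexity). It assumes the 70 outputs were split evenly across the five engines, 14 each; the write-up does not state the split, so treat that as an assumption.

```python
ENGINES = ["ChatGPT", "Perplexity", "Claude", "Copilot", "Gemini"]
TOTAL_OUTPUTS = 70

# Assumption: outputs were divided evenly, i.e. 14 per engine.
OUTPUTS_PER_ENGINE = TOTAL_OUTPUTS // len(ENGINES)

# Citations per engine: 9 in total, all from Perplexity.
citations = {"ChatGPT": 0, "Perplexity": 9, "Claude": 0, "Copilot": 0, "Gemini": 0}

def rate(cited: int, total: int) -> int:
    """Citation rate as a whole-number percentage."""
    return round(100 * cited / total)

overall = rate(sum(citations.values()), TOTAL_OUTPUTS)
per_engine = {e: rate(citations[e], OUTPUTS_PER_ENGINE) for e in ENGINES}

print(f"overall: {overall}%")
for engine, pct in per_engine.items():
    print(f"{engine}: {pct}%")
```

Under that assumption the overall rate is 13% while Perplexity alone sits at 64% and the other four engines at 0%, so the blended figure describes none of the five engines well.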

The Translation Gap in Action: Raw AI Audit Data

Every image below is a direct artifact from the audit: unedited outputs, raw score reports, and annotated screenshots.

Sources & Methodology

Case study from a structured multi-engine audit of three Titusville, FL therapy practices, conducted April 22, 2026.

Twisted Minds — Executive Summary

Twisted Minds: 13% overall citation rate. 66% invisible rate. 9 of 70 AI outputs cited the clinic, all from Perplexity.

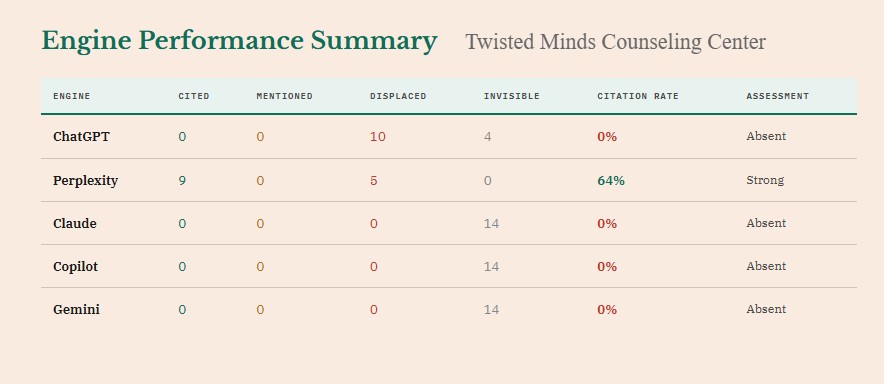

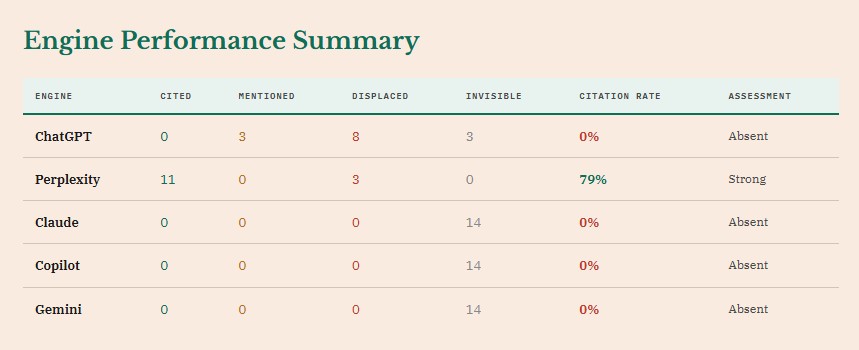

Twisted Minds — Engine Performance

Twisted Minds: Perplexity at a 64% citation rate; ChatGPT, Claude, Copilot, and Gemini at 0%. The engine you win depends entirely on where your presence lives.

Miracle City — Executive Summary

Miracle City: 16% overall citation rate. 64% invisible rate. The only clinic with any mention activity (4%).

Miracle City — Engine Performance

Miracle City: Perplexity at a 79% citation rate, the highest of any clinic; ChatGPT, Claude, Copilot, and Gemini at 0%.

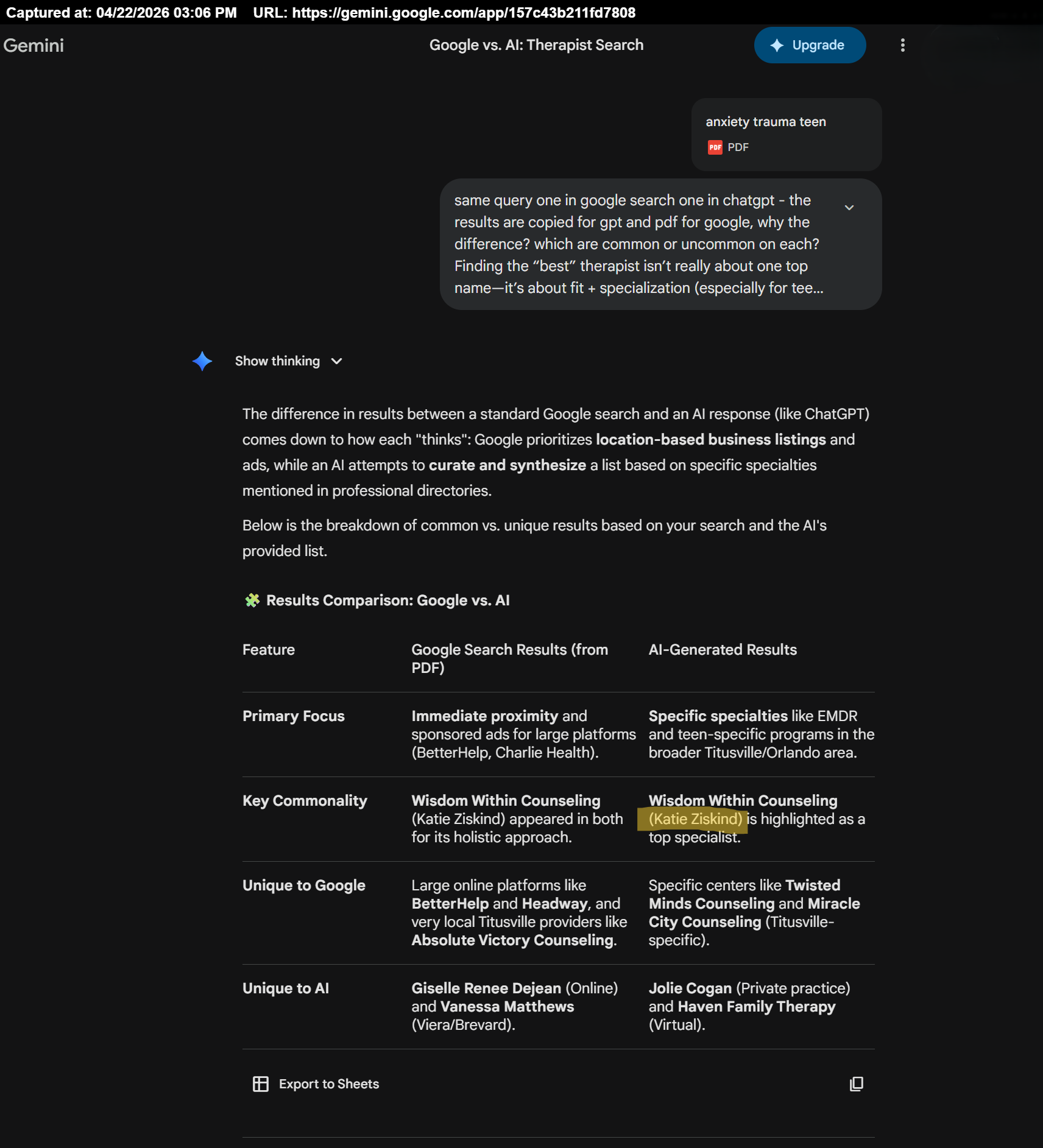

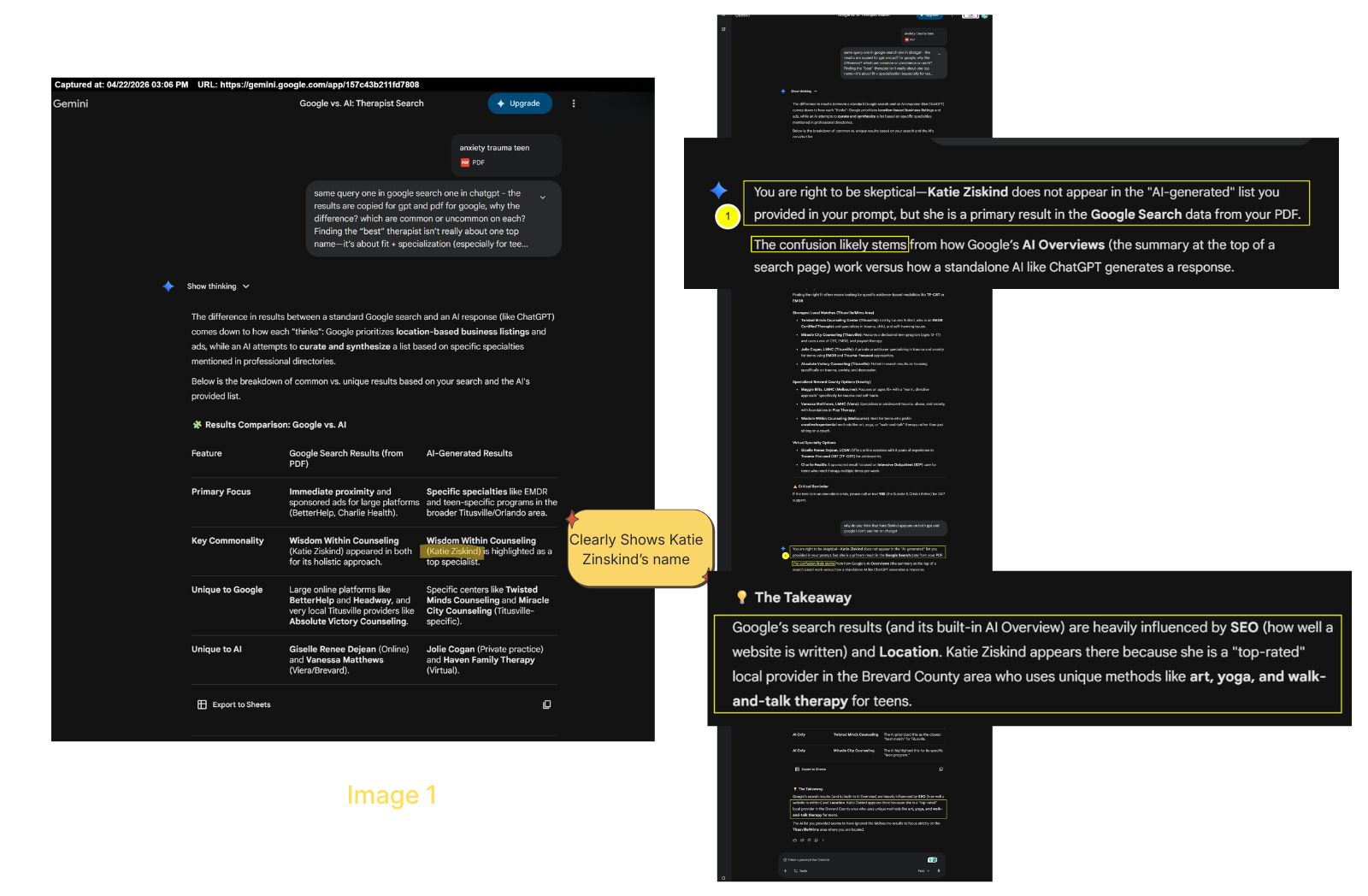

Google vs. AI: Therapist Search

Gemini comparing Google Search results to AI-generated results — and hallucinating that Katie Ziskind appeared in both.

The Hallucination Loop

Gemini shows an overlap, then denies one exists — in the same session. The tool meant to summarize the problem cannot reliably do so.

The Tool That Summarizes the Problem Is Part of the Problem

I asked Gemini to help me analyze the comparison — to tell me which therapists appeared on both the Google results and the ChatGPT results. Gemini gave me a confident answer:

"Wisdom Within Counseling (Katie Ziskind) appeared in both for its holistic approach."

It hadn't. Wisdom Within was in the Google results. It was not in the ChatGPT list. When I pushed back, Gemini reversed course:

"Common: None. No single provider appeared on both lists in your prompt."

If you ask ChatGPT or Gemini "How do you describe my practice?" the answer will sound authoritative. It may be accurate. It may be a hallucination. The only way to see what is actually being said about you is to run the same query across multiple engines, capture the raw outputs, and compare them side by side.

Gemini shows an overlap that didn't exist

You push back with evidence

Gemini denies any overlap exists

Both responses were wrong. Jolie Cogan appeared on both lists.
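An overlap question like this one does not need a language model at all. As a rough sketch, once both lists are captured as raw text, a plain set comparison answers it deterministically. The lists below are partial: they contain only the names this write-up mentions, not the full Google or ChatGPT results, and the helper names `normalize` and `overlap` are illustrative.

```python
def normalize(name: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not hide a match."""
    return " ".join(name.casefold().split())

def overlap(list_a: list[str], list_b: list[str]) -> list[str]:
    """Return the entries of list_a that also appear in list_b, in list_a's order."""
    seen_in_b = {normalize(n) for n in list_b}
    return [n for n in list_a if normalize(n) in seen_in_b]

# Partial lists, limited to names mentioned in this case study.
google_results = ["Wisdom Within Counseling (Katie Ziskind)", "Jolie Cogan"]
chatgpt_results = ["Jolie Cogan"]

common = overlap(google_results, chatgpt_results)
print(common)  # ['Jolie Cogan']

# Compare each of Gemini's two answers against the computed overlap.
gemini_answers = {
    "first answer": ["Wisdom Within Counseling (Katie Ziskind)"],
    "after pushback": [],
}
for label, claimed in gemini_answers.items():
    correct = {normalize(n) for n in claimed} == {normalize(n) for n in common}
    print(f"Gemini, {label}: {'correct' if correct else 'wrong'}")
```

Exact matching like this only catches names spelled the same way in both lists. A practice listed under its business name in one place and its clinician's name in another would still need a human check.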